Interpretable Deep Learning for Biodiversity Monitoring: Introducing AudioProtoPNet

Global biodiversity has declined sharply in recent decades; in North America alone, wild bird populations have fallen by 29% since 1970. The drivers of this loss include land use changes, resource exploitation, pollution, climate change, and invasive species. Effective monitoring systems are crucial for combating biodiversity decline, and birds serve as key indicators of environmental health. Passive Acoustic Monitoring (PAM) has emerged as a cost-effective method for collecting bird data without disturbing habitats. Traditional PAM analysis is time-consuming, but recent advances in deep learning offer promising ways to automate bird species identification from audio recordings. For these models to be useful in practice, however, ornithologists and biologists must be able to understand how they reach their decisions.

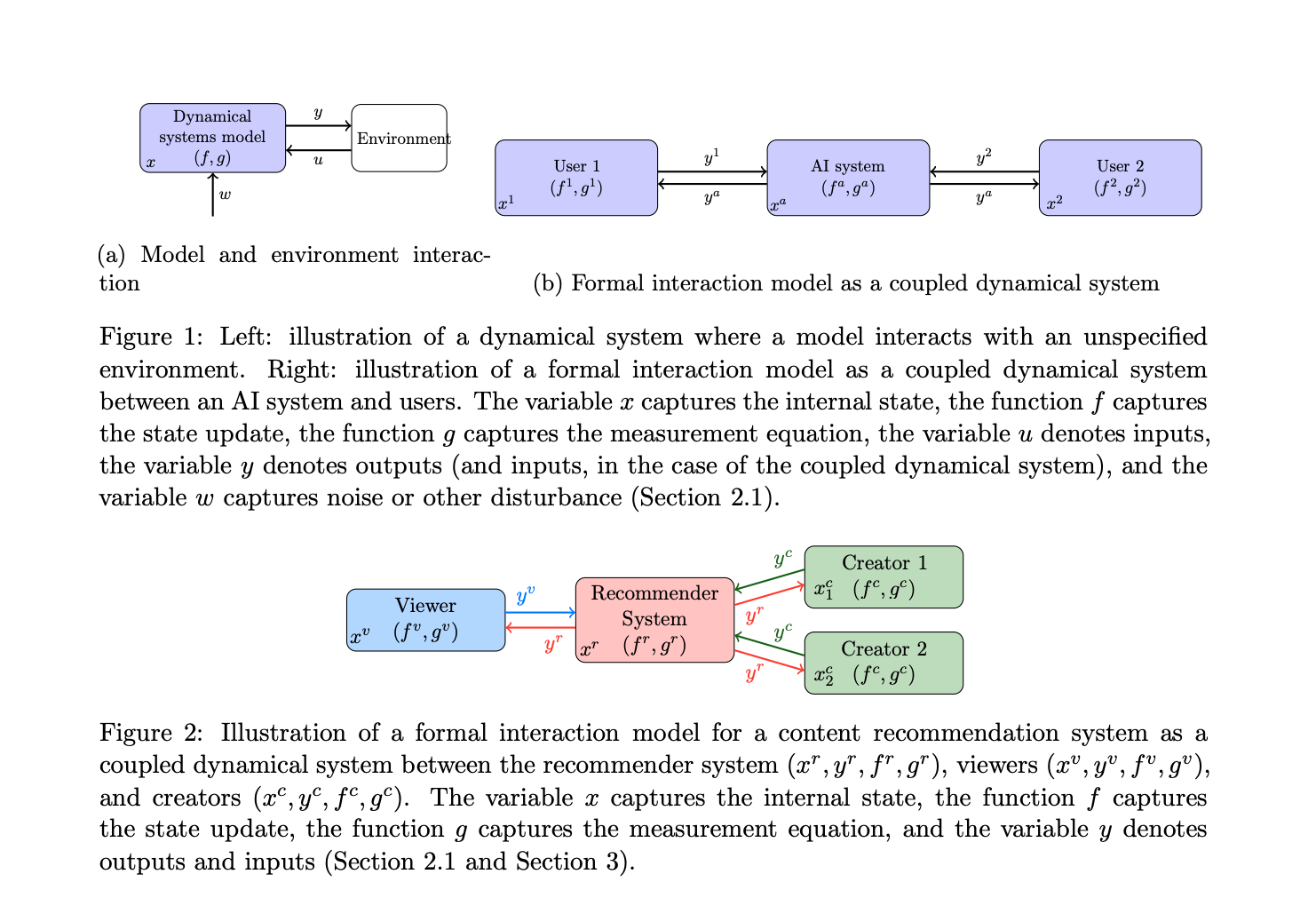

While explainable AI (XAI) methods have been extensively explored in image and text processing, research on their application to audio data is limited. Post-hoc explanation methods, such as counterfactual, gradient-based, perturbation-based, and attention-based attribution, have been studied, mainly in medical contexts. Preliminary research on interpretable deep learning for audio includes deep prototype learning, which was originally proposed for image classification. Later advances include DeformableProtoPNet, but the application of prototype learning to complex multi-label problems such as bioacoustic bird classification remains unexplored.

Researchers from the Fraunhofer Institute for Energy Economics and Energy System Technology (IEE) and Intelligent Embedded Systems (IES), University of Kassel, present AudioProtoPNet, an adaptation of the ProtoPNet architecture tailored for complex multi-label audio classification and designed to be inherently interpretable. Using a ConvNeXt backbone for feature extraction, the approach learns prototypical patterns for each bird species from spectrograms of the training data. To classify a new recording, the model compares its embeddings with these prototypes in latent space, which yields easily understandable explanations for the model’s decisions.

The model comprises a Convolutional Neural Network (CNN) backbone, a prototype layer, and a fully connected final layer. The backbone extracts embeddings from input spectrograms, the prototype layer compares them with prototypes in latent space using cosine similarity, and the final layer turns the resulting similarity scores into class predictions. The model is trained with a weighted loss function, in two phases, to optimize prototype adaptation and the interplay between the model's components. Prototypes are visualized by projecting them onto similar patches from training spectrograms, which keeps the explanations faithful to the model and meaningful to a human reader.
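The comparison step described above can be illustrated with a small NumPy sketch. It is not the authors' implementation: the random arrays stand in for real ConvNeXt embeddings and learned prototypes, and the names `cosine_similarity_maps` and `classify`, the max-pooling over patch positions (borrowed from the original ProtoPNet design), the sigmoid output, and all shapes are assumptions made for illustration.

```python
import numpy as np

def cosine_similarity_maps(embeddings, prototypes, eps=1e-8):
    """Cosine similarity between every embedding patch and every prototype.

    embeddings: (H, W, D) latent patches of one spectrogram (hypothetical shape).
    prototypes: (P, D) prototype vectors.
    Returns an array of shape (P, H, W).
    """
    z = embeddings / (np.linalg.norm(embeddings, axis=-1, keepdims=True) + eps)
    p = prototypes / (np.linalg.norm(prototypes, axis=-1, keepdims=True) + eps)
    return np.einsum("hwd,pd->phw", z, p)

def classify(embeddings, prototypes, weights, bias):
    """Prototype layer followed by a fully connected layer.

    Assumption: each prototype's score is its maximum similarity over all
    patches, and a sigmoid gives independent per-class probabilities
    (multi-label), rather than a softmax.
    """
    sims = cosine_similarity_maps(embeddings, prototypes)    # (P, H, W)
    scores = sims.reshape(sims.shape[0], -1).max(axis=1)     # (P,)
    logits = weights @ scores + bias                         # (C,)
    probs = 1.0 / (1.0 + np.exp(-logits))
    return probs, scores, sims

# Toy stand-ins for backbone output and learned parameters.
rng = np.random.default_rng(0)
H, W, D = 4, 6, 16
n_classes, protos_per_class = 3, 2
embeddings = rng.normal(size=(H, W, D))
prototypes = rng.normal(size=(n_classes * protos_per_class, D))
# Plant one prototype exactly on a patch so its best match is easy to check.
prototypes[0] = embeddings[1, 2]
# Each class is connected only to its own prototypes (an assumed layout).
weights = np.kron(np.eye(n_classes), np.ones((1, protos_per_class)))
bias = np.zeros(n_classes)

probs, scores, sims = classify(embeddings, prototypes, weights, bias)
best_patch = np.unravel_index(np.argmax(sims[0]), sims[0].shape)
print("per-class probabilities:", probs)
print("best-matching patch for prototype 0:", best_patch)
```

The similarity map `sims[k]` is what makes the prediction inspectable: it shows where in the spectrogram prototype `k` matched, and the matching patch can be displayed next to the training patch the prototype was projected onto.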

The key contributions of this research are the following:

1. The researchers developed a prototype learning model (AudioProtoPNet) for bioacoustic bird classification. The model identifies prototypical parts in the spectrograms of the training samples and uses them for effective multi-label classification.

2. The model is evaluated on eight different datasets of bird sound recordings from various geographical regions. The results show that the model can learn relevant and interpretable prototypes.

3. A comparison with two state-of-the-art black-box deep learning models for bioacoustic bird classification shows that this interpretable model achieves similar performance on the eight evaluation datasets, demonstrating the applicability of interpretable models in bioacoustic monitoring.

In conclusion, this research introduces AudioProtoPNet, an interpretable model for bioacoustic bird classification that addresses the limitations of black-box approaches. Evaluation across diverse datasets demonstrates its efficacy and interpretability and shows its potential for biodiversity monitoring.
