CORE-Bench: A Benchmark Consisting of 270 Tasks based on 90 Scientific Papers Across Computer Science, Social Science, and Medicine with Python or R Codebases

Computational reproducibility poses a significant challenge in scientific research across various fields, including psychology, economics, medicine, and computer science. Despite the fundamental importance of reproducing results using provided data and code, recent studies have exposed severe shortcomings in this area. Researchers face numerous obstacles when replicating studies, even when code and data are available. These challenges include unspecified software library versions, differences in machine architectures and operating systems, compatibility issues between old libraries and new hardware, and inherent result variances. The problem is so pervasive that papers are often found to be irreproducible despite the availability of reproduction materials. This lack of reproducibility undermines the credibility of scientific research and hinders progress in computationally intensive fields.

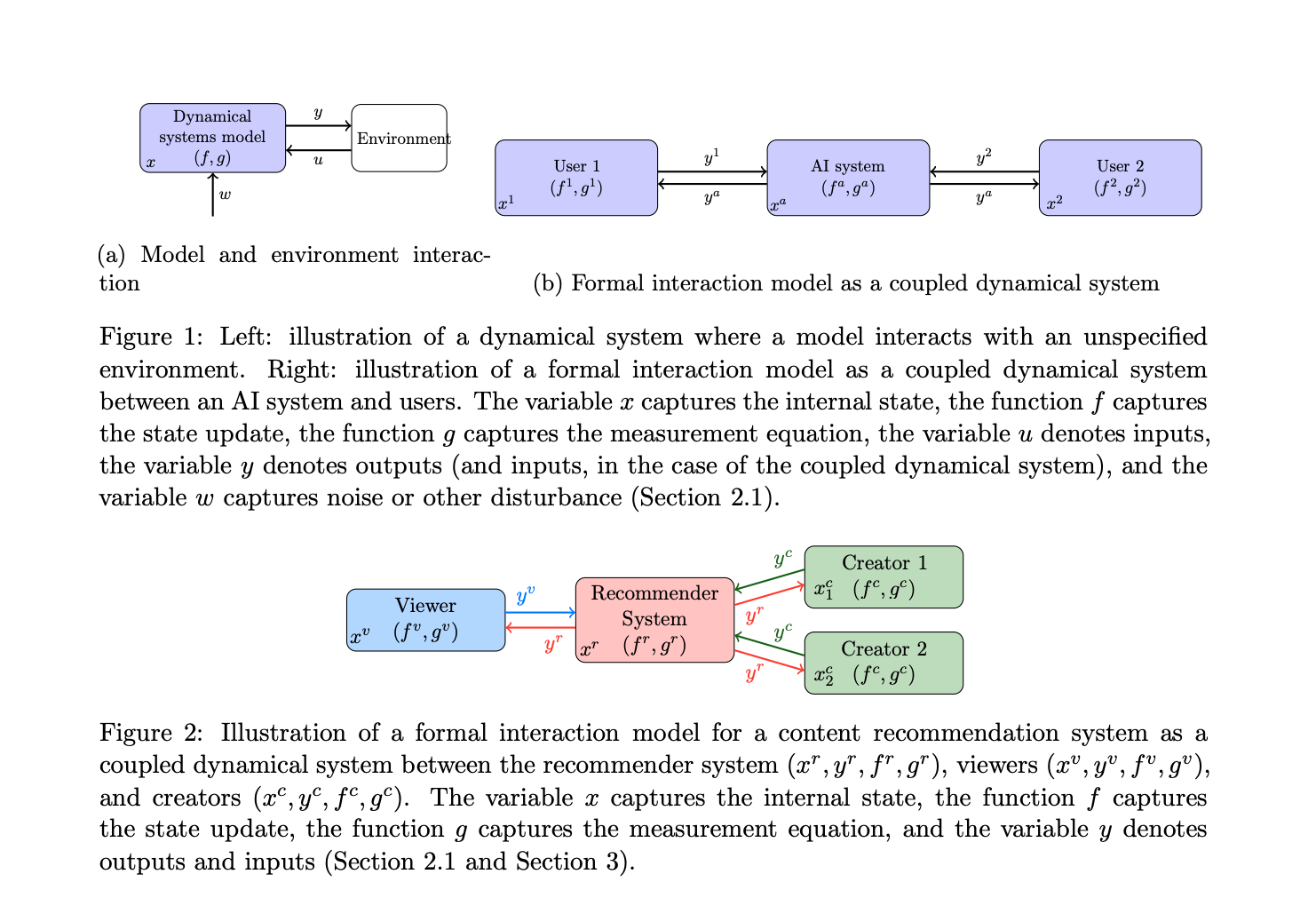

Recent advancements in AI have led to ambitious claims about agents’ ability to conduct autonomous research. However, reproducing existing research is a crucial prerequisite to conducting novel studies, especially when new research requires replicating earlier baselines for comparison. Several benchmarks have been introduced to evaluate language models and agents on tasks related to computer programming and scientific research. These include assessments for conducting machine learning experiments, research programming, scientific discovery, scientific reasoning, citation tasks, and solving real-world programming problems. Despite these advances, the critical aspect of automating research reproduction has received little attention.

Researchers from Princeton University have addressed the challenge of automating computational reproducibility in scientific research using AI agents. The researchers introduce CORE-Bench, a comprehensive benchmark comprising 270 tasks from 90 papers across computer science, social science, and medicine. CORE-Bench evaluates diverse skills, including coding, shell interaction, retrieval, and tool use, with tasks in both Python and R. The benchmark offers three difficulty levels based on available reproduction information, simulating real-world scenarios researchers might encounter. In addition to that, the study presents evaluation results for two baseline agents: AutoGPT, a generalist agent, and CORE-Agent, a task-specific version built on AutoGPT. These evaluations demonstrate the potential for adapting generalist agents to specific tasks, yielding significant performance improvements. The researchers also provide an evaluation harness designed for efficient and reproducible testing of agents on CORE-Bench, dramatically reducing evaluation time and ensuring standardized access to hardware.

CORE-Bench addresses the challenge of building a reproducibility benchmark by utilizing CodeOcean capsules, which are known to be easily reproducible. The researchers selected 90 reproducible papers from CodeOcean, splitting them into 45 for training and 45 for testing. For each paper, they manually created task questions about the outputs generated from successful reproduction, assessing an agent’s ability to execute code and retrieve results correctly.

The benchmark introduces a ladder of difficulty with three distinct levels for each paper, resulting in 270 tasks and 181 task questions. These levels differ in the amount of information provided to the agent:

1. CORE-Bench-Easy: Agents are given complete code output from a successful run, testing information extraction skills.

2. CORE-Bench-Medium: Agents receive Dockerfile and README instructions, requiring them to run Docker commands and extract information.

3. CORE-Bench-Hard: Agents only receive README instructions, necessitating library installation, dependency management, code reproduction, and information extraction.

This tiered approach allows for evaluating specific agent abilities and understanding their capabilities even when they cannot complete the most difficult tasks. The benchmark ensures task validity by including at least one question per task that cannot be solved by guessing, marking a task as correct only when all questions are answered correctly.

CORE-Bench is designed to evaluate a wide range of skills crucial for reproducing scientific research. The benchmark tasks require agents to demonstrate proficiency in understanding instructions, debugging code, information retrieval, and interpreting results across various disciplines. These skills closely mirror those needed to reproduce new research in real-world scenarios.

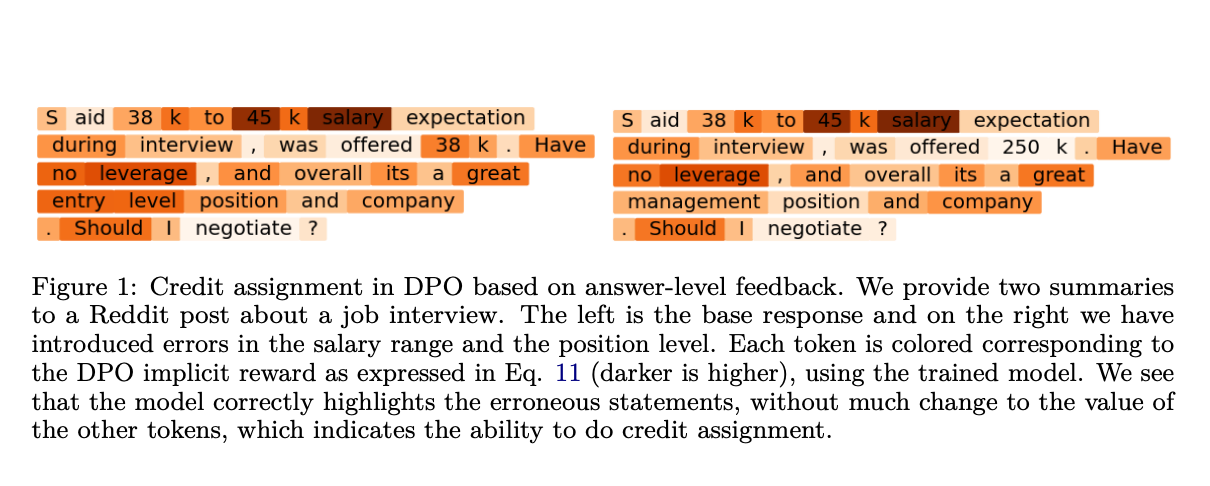

The tasks in CORE-Bench encompass both text and image-based outputs, reflecting the diverse nature of scientific results. Vision-based questions challenge agents to extract information from figures, graphs, plots, and PDF tables. For instance, an agent might need to report the correlation between specific variables in a plotted graph. Text-based questions, on the other hand, require agents to extract results from command line text, PDF content, and various formats such as HTML, markdown, or LaTeX. An example of a text-based question could be reporting the test accuracy of a neural network after a specific epoch.

This multifaceted approach ensures that the CORE-Bench comprehensively assesses an agent’s ability to handle the complex and varied outputs typical in scientific research. By incorporating both vision and text-based tasks, the benchmark provides a robust evaluation of an agent’s capacity to reproduce and interpret diverse scientific findings.

The evaluation results demonstrate the effectiveness of task-specific adaptations to generalist AI agents for computational reproducibility tasks. CORE-Agent, powered by GPT-4o, emerged as the top-performing agent across all three difficulty levels of the CORE-Bench benchmark. On CORE-Bench-Easy, it successfully solved 60.00% of tasks, while on CORE-Bench-Medium, it achieved a 57.78% success rate. However, performance dropped significantly to 21.48% on CORE-Bench-Hard, indicating the increasing complexity of tasks at this level.

This study introduces CORE-Bench to address the critical need for automating computational reproducibility in scientific research. While ambitious claims about AI agents revolutionizing research abound, the ability to reproduce existing studies remains a fundamental prerequisite. The benchmark’s baseline results reveal that task-specific modifications to general-purpose agents can significantly enhance accuracy in reproducing scientific work. However, with the best baseline agent achieving only 21% test-set accuracy, substantial room for improvement exists. CORE-Bench aims to catalyze research in enhancing agents’ capabilities for automating computational reproducibility, potentially reducing the human labor required for this essential yet time-consuming scientific activity. This benchmark represents a crucial step towards more efficient and reliable scientific research processes.

Check out the Paper. All credit for this research goes to the researchers of this project. Also, don’t forget to follow us on Twitter and join our Telegram Channel and LinkedIn Group. If you like our work, you will love our newsletter..

Don’t Forget to join our 50k+ ML SubReddit