Generative AI is already firmly anchored in science, according to survey

A global survey of nearly 3,000 researchers and clinicians reveals that AI, primarily ChatGPT, is already widely used in science. Most respondents see great potential, but also express concerns.

Scientific publisher Elsevier conducted an extensive online survey of 2,999 researchers and clinicians from 123 countries between December 2023 and February 2024. The goal was to understand perceptions, usage, and expectations of AI, especially generative AI.

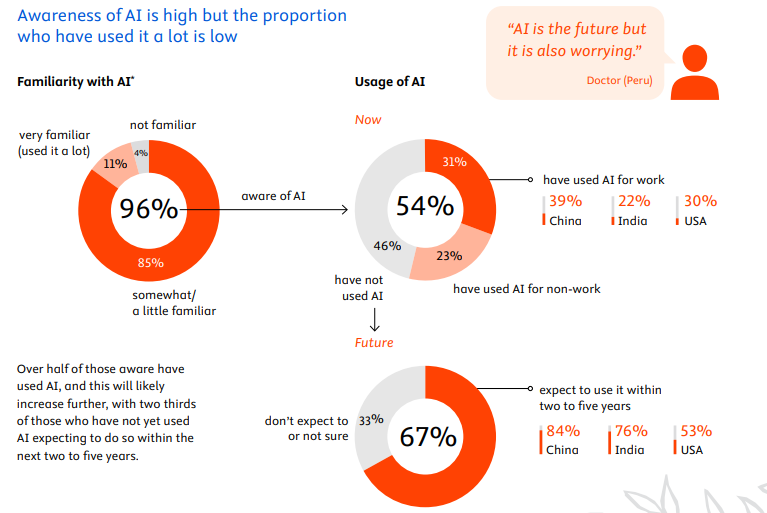

The results show that AI is already firmly established in science: 96% of respondents have heard of AI and over half (54%) have used it. Nearly a third (31%) already use AI for work. Lack of time to learn the tools is the main reason for non-use.

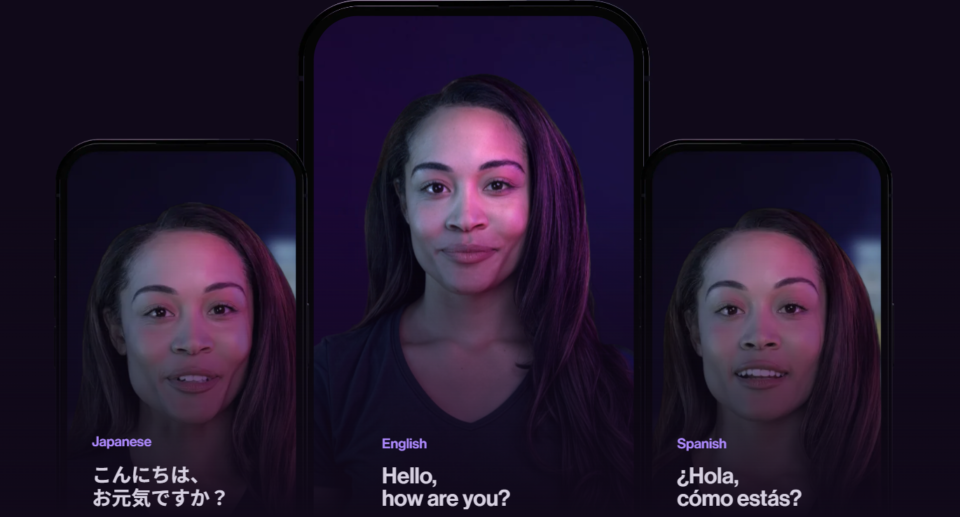

ChatGPT is by far the best-known AI product at 89% awareness. A quarter have used the chatbot professionally. Other AI tools like Bard (40% awareness) and Bing Chat (39%) lag far behind.

Usage varies by region: In China, 39% use AI for work, compared to 30% in the US and 22% in India. Researchers (37%) are more likely to use AI for work than clinicians (26%).

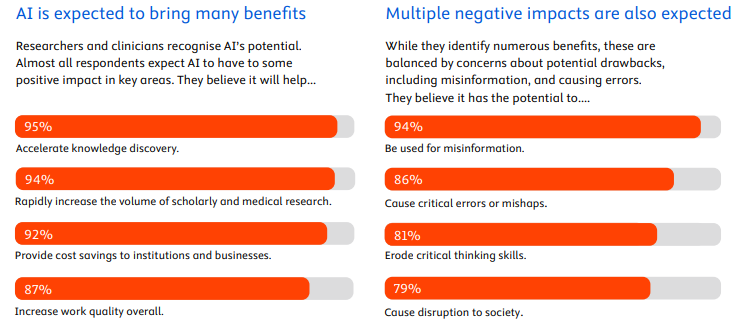

Most respondents (72%) expect AI to have a transformative or significant impact on their field. Almost all believe AI will speed up knowledge discovery (95%) and increase scientific research output (94%).

Despite these positive expectations, respondents have concerns: 94% fear AI could be used for disinformation, 86% worry about critical errors or accidents caused by AI, and 81% think AI could impair critical thinking to some extent.

The study reveals what researchers and clinicians want from AI developers: 58% say training models for accuracy, ethics, and safety would greatly increase their confidence. Using high-quality, peer-reviewed content to train models (57%) and providing references (56%) are also cited as trust-building factors.

A separate study by researchers at the University of Tübingen and Northwestern University shows the impact of ChatGPT on scientific practice: at least 10% of the 14 million PubMed abstracts examined were influenced by AI text generators. In some countries and disciplines, such as China, South Korea, and IT, the proportion was as high as 35%. The researchers suspect the true share is higher still, as many authors revise AI-generated text and such cases go undetected.
